Volume 8, Issue 5, May 2023 International Journal of Innovative Science and Research Technology

ISSN No:-2456-2165

Design of VLSI Architecture for a Flexible Testbed of Artificial Neural Network for Training and Testing on FPGA

Gurmeet Kaur Arora

Electronics and Electrical Communication Engineering,

Indian Institute of Technology, Kharagpur

Abstract:- General-Purpose Processor (GPP)-based computers and Application Specific Integrated Circuits (ASICs) are the typical computing platforms used to develop back propagation (BP) algorithm-based Artificial Neural Network (ANN) systems, but these computing devices constitute a hurdle for further advanced improvements due to a high requirement for sustaining a balance between performance and flexibility. In this work, an architecture for the BP learning algorithm using a 16-bit fixed-point representation is designed for the classification of handwritten digits on a field-programmable gate array (FPGA). The proposed design is directly coded in Verilog Hardware Description Language (HDL), optimized for resource utilization and frequency, and synthesized on the ML-605 Virtex 6 evaluation board. Experimental results show 10 times speedup and reduced hardware utilization when compared with existing implementations from literature. The architecture is expandable to other specifications in terms of number of layers, number of neurons in each layer, and the activation function for each neuron. The correctness of the proposed design is authenticated by comparing parameters obtained through Python code and Verilog.

Keywords:- General purpose processors (GPP), application specific integrated circuits (ASICs), artificial neural network (ANN), resource utilization, hardware description language (HDL), field programmable gate array (FPGA).

I. INTRODUCTION

The biological neural networks that make up the human brain are deeply layered. These biological neural networks are able to recognize complicated things by first spotting basic traits and then combining them to pick up on complex ones. Similarly, an artificial neural network is trained to recognize different objects by first identifying small patterns inside the object and then integrating these simple patterns to identify complex patterns.

ML algorithms are generally complex and resource hungry; thus, implementing the back propagation algorithm on a low-power device such as an FPGA is much more complicated than on GPUs or CPUs [18]. The data processing required to obtain the requisite convergence and accuracy by updating each weight makes BP computationally complex and time-consuming. Thus, almost all of the existing FPGA designs for ANN are based on software-hardware co-design [17] [11] [14] [19].

A tremendous number of parallel computing operations are required by ANN architectures. Due to inherent parallelization and application-specific adaptation, FPGAs are a realistic and affordable choice that, when compared to processor-based systems, helps in meeting stringent real-time speed requirements, delivers advanced AI services, and protects user privacy. Current ANN models emphasize a static and offline training mechanism in which the training data is pre-prepared. Nonetheless, training ANNs dynamically and adapting the models to the local environment is in great demand [16].

The goal is to provide a flexible testbed on FPGA where ML designers can specify their neural network architecture and fully or partially utilize the available hardware resources.

A multi-layered perceptron network of 784 x 32 x 10 is implemented and verified on the Xilinx Virtex 6 ML-605 evaluation board. Using 100 out of 60,000 training images and 100 out of 10,000 validation images from the MNIST dataset, this network is trained and tested in Python and Verilog using the stochastic gradient descent BP algorithm with a learning rate of 0.125 for 10 epochs. The performance of the Python and Verilog-based implementations is compared in terms of both accuracy and speed.

II. BACK PROPAGATION ALGORITHM

Back propagation is the process of calculating the error, that is, the difference between the predicted output and the actual output, and then propagating this error backwards through the network in order to revise the weights of the neurons. This is accomplished by calculating the gradient of the error with regard to the weights of each neuron using the chain rule of calculus.

$$e_{Ni} = y_{Ni} - d_{Ni}$$

where $e_{Ni}$ is the error signal and $d_{Ni}$ is the desired output. The weight correction parameter can be summarized as

IJISRT23MAY2378 www.ijisrt.com 2605


III. METHODOLOGY

A. Finite State Machine for ANN

Each image undergoes six distinct phases during the computation process. The FSM used to execute back propagation in Verilog is illustrated in Fig. 1. Table 1 provides a summary of each state and its corresponding function.

Fig. 1: FSM for ANN

Table 1: Summary of States and their functionality

B. Multiply-Accumulate Unit

A Multiply-Accumulate (MAC) unit is an arithmetic logic unit (ALU) designed to perform two mathematical operations on two sets of input values: multiplication and addition. It multiplies two input values and then adds the product to an accumulator register, which contains the sum of all prior products computed by the MAC unit.

Fig. 2: MAC Unit
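The multiply-then-accumulate behaviour described above can be modelled in a few lines of Python. This is only a software sketch under stated assumptions, not the Verilog module of this work: the paper specifies a 16-bit fixed-point representation but not the integer/fraction split, so the Q8.8 format (`FRAC_BITS = 8`), the saturating overflow policy, and all names (`MacUnit`, `to_fixed`, `saturate`) are hypothetical choices made for illustration.

```python
FRAC_BITS = 8                      # assumed Q8.8 split; the paper only states "16-bit fixed-point"
WORD_MIN, WORD_MAX = -(1 << 15), (1 << 15) - 1


def saturate(raw: int) -> int:
    """Clamp an integer to the signed 16-bit range (assumed overflow policy)."""
    return max(WORD_MIN, min(WORD_MAX, raw))


def to_fixed(x: float) -> int:
    """Quantize a real number to the assumed Q8.8 format."""
    return saturate(round(x * (1 << FRAC_BITS)))


def to_float(raw: int) -> float:
    """Convert a Q8.8 integer back to a real number."""
    return raw / (1 << FRAC_BITS)


class MacUnit:
    """Accumulates products of fixed-point operands, one pair per step."""

    def __init__(self) -> None:
        self.acc = 0               # accumulator kept wider than 16 bits

    def clear(self) -> None:
        self.acc = 0

    def step(self, a: int, b: int) -> None:
        self.acc += a * b          # each product carries 2 * FRAC_BITS fractional bits

    def result(self) -> int:
        # Drop the extra fractional bits, then clamp to the 16-bit word.
        return saturate(self.acc >> FRAC_BITS)


mac = MacUnit()
for pixel, weight in [(1.5, 2.0), (0.5, -1.0)]:
    mac.step(to_fixed(pixel), to_fixed(weight))
print(to_float(mac.result()))      # 1.5*2.0 + 0.5*(-1.0) = 2.5
```

Keeping the accumulator wider than the operands and rescaling only once at the end avoids losing precision on every product; whether the hardware does the same is not stated in the text.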


C. Implementation of a Neuron

A neuron is composed of a dedicated weight memory and a MAC unit, which is utilized based on the operational state. The register is loadable, and its value depends on the operational state: it is 1'b0 in State 0, latched in States 1 and 2, either latched or loaded with Weight2[counter] in State 3, 1'b0 in State 4, and either loaded with Weight1[counter] or latched in State 5. The value in States 3 and 5 depends on the layer to which the neuron belongs.

The output flow is determined by the Conditional Block, which directs it to the LUT, the weight memory, or the error computation block, as shown in Fig. 3. The look-up table (LUT) provides the activation value and its derivative with O(1) complexity; it is addressed by the magnitude of the computed weighted sum. Because there is no earlier layer to which error signals must be propagated back, neurons in the first layer do not use the error computation block. Multiplexers use the control signals generated by the FSM as their select lines.

Fig. 3: Architecture of a Neuron

IV. OVERALL DESIGN

A 784 x 32 x 10 architecture was constructed using the generate construct in Verilog. The hidden layer has 32 neurons with a memory depth of 784, while the output layer has 10 neurons with a memory depth of 32 each. Some computations reuse MAC units while others require additional resources to achieve a balance between performance and resource utilization. The architecture shown in Fig. 4 operates as follows:

In the first state, the MAC units of the first layer are active. The input pixels are multiplied by their respective weights, and the resulting weighted sum is passed through LUTs to derive H and Hbar simultaneously for all 32 neurons.

In the second state, the H and Hbar values are sequentially multiplied by the output layer's weights, with the counter running from 0 to 31. The resulting weighted sum is then fed through LUTs to derive O and Obar for each of the 10 output neurons. At the end of this state, error2 in the output layer is evaluated using 10 readily available subtractors, and the delta2 value is determined using 10 multipliers. These operations are combinational and do not require any additional clock cycle.

In the third state, the output layer registers are serially loaded with weights from the weight2 memory. This enables the product of the learning rate, delta2, and H to be added to the weight, and the weight is then written back to the memory.

In the fourth state, the hidden layer error is calculated by multiplying delta2 and the weights in sequence. Error1 for the present counter value is the sum of all of these partial products in a single cycle. To accomplish this, an adder with 10 operands is required. At the end of this state, delta1 values for all 32 neurons are calculated using the available error1 values.

In the fifth state, the input pixel is multiplied by the shifted delta1 value, and in one cycle, 32 weights in the hidden layer are updated. This step is similar to the third state, which updates the weights of the output layer.

A. Clock Cycles

For an arbitrary network (M x N x K x L), the number of clock cycles and computations can be generalized as follows. For the 784 x 32 x 10 network, forward propagation requires 816 cycles (784 + 32); for the four-layer network this generalizes to M+N+K. Back propagation requires 848 cycles (32 + 32 + 784), which generalizes to K + (K+N) + (N+M). Therefore, the total number of cycles required is M+N+K + K + (N+K) + (N+M).

B. Computational Units

In a 784 x 32 x 10 network, there are 42 MAC units, 10 subtractors, one adder with ten operands, 42 multipliers, and 42 LUTs. Generalizing to a network with dimensions M x N x K x L, the required number of MAC units, multipliers, and LUTs would each be N+K+L, the required number of subtractors would be L, and one adder with N operands would be required, assuming N is greater than K and L.

The process of training and testing on the MNIST dataset begins by loading the weight memory and input memory and initializing the values of parameters such as the number of hidden layers, neurons in each layer, activation function for each neuron, and number of epochs. The next step is to load an image to be trained and perform forward propagation. The weights are then updated, followed by an error calculation. This process, from loading the image to be trained to updating the weights, is repeated for 100 training images.


Once the training process is complete, the next step is to load an image to be tested and perform forward propagation. The output is then compared with the desired output, and if it matches, the value of accuracy is incremented. This process, from loading the image to be tested to comparing the output with the desired output, is repeated for 100 testing images. The whole process constitutes one epoch.
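The train-then-test flow of one epoch can be sketched in floating-point Python as a reference model. Everything here is illustrative and assumes details the text does not give: a sigmoid activation, no bias terms, an arg-max comparison as the test for a "match", the gradient-descent sign convention for e = y - d, and toy layer sizes and data in place of the 784 x 32 x 10 MNIST setup. The function names (`forward`, `train_step`, `run_epoch`) are hypothetical. The text also does not say whether the hidden error is computed from the output weights before or after their update; the sketch uses the updated ones only because it follows the state order.

```python
import math
import random


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def one_hot(label: int, n: int) -> list:
    return [1.0 if i == label else 0.0 for i in range(n)]


def forward(x, w1, w2):
    """Forward pass: hidden activations H, then output activations O."""
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in w1]
    o = [sigmoid(sum(w * hj for w, hj in zip(row, h))) for row in w2]
    return h, o


def train_step(x, target, w1, w2, lr=0.125):
    h, o = forward(x, w1, w2)
    # Output error e = y - d, scaled by the sigmoid derivative o * (1 - o).
    delta2 = [(ok - dk) * ok * (1.0 - ok) for ok, dk in zip(o, target)]
    # Update the output-layer weights (assumed descent sign convention).
    for k, row in enumerate(w2):
        for j in range(len(row)):
            row[j] -= lr * delta2[k] * h[j]
    # Hidden error: sum of delta2 * weight partial products per hidden neuron.
    error1 = [sum(delta2[k] * w2[k][j] for k in range(len(w2)))
              for j in range(len(h))]
    delta1 = [ej * hj * (1.0 - hj) for ej, hj in zip(error1, h)]
    # Update the hidden-layer weights.
    for j, row in enumerate(w1):
        for i in range(len(row)):
            row[i] -= lr * delta1[j] * x[i]


def run_epoch(train_set, test_set, w1, w2, lr=0.125):
    """Train on every training sample, then count correct test predictions."""
    for x, label in train_set:
        train_step(x, one_hot(label, len(w2)), w1, w2, lr)
    accuracy = 0
    for x, label in test_set:
        _, o = forward(x, w1, w2)
        if o.index(max(o)) == label:   # assumed matching rule: arg-max
            accuracy += 1
    return accuracy


random.seed(0)
n_in, n_hid, n_out = 4, 3, 2           # toy sizes; the paper uses 784 x 32 x 10
w1 = [[random.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_hid)]
w2 = [[random.uniform(-0.5, 0.5) for _ in range(n_hid)] for _ in range(n_out)]
data = [([1.0, 1.0, 0.0, 0.0], 0), ([0.0, 0.0, 1.0, 1.0], 1)] * 50
for epoch in range(10):
    acc = run_epoch(data, data, w1, w2)
print(acc, "of", len(data), "test samples matched")
```

A learning rate of 0.125 is a power of two, so a fixed-point design can apply it as a 3-bit right shift instead of a multiplication, which may be what the "shifted delta1 value" in the fifth state refers to.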

Fig. 4: ANN Architecture for 784 x 32 x 10 network for training and testing of MNIST Dataset
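The cycle and unit counts given under Clock Cycles and Computational Units can be checked with a short Python calculation. The helper names below are hypothetical, and extending the formulas to an arbitrary list of layer sizes is an extrapolation from the three-layer and four-layer cases stated in the text, not something the paper spells out.

```python
def forward_cycles(layers):
    """Each layer consumes one input per cycle, so it costs its fan-in."""
    return sum(layers[:-1])


def backward_cycles(layers):
    """Weight updates cost each layer's fan-in; each hidden layer's error
    computation costs one cycle per neuron in that hidden layer."""
    weight_updates = sum(layers[:-1])
    hidden_errors = sum(layers[1:-1])
    return weight_updates + hidden_errors


def mac_units(layers):
    """One MAC unit (and one multiplier and one LUT) per computing neuron."""
    return sum(layers[1:])


def subtractors(layers):
    """One subtractor per output neuron for the output error."""
    return layers[-1]


mnist_net = [784, 32, 10]
print(forward_cycles(mnist_net))    # 784 + 32 = 816
print(backward_cycles(mnist_net))   # 32 + 32 + 784 = 848
print(mac_units(mnist_net))         # 32 + 10 = 42
print(subtractors(mnist_net))       # 10
```

For a four-layer list `[M, N, K, L]` the same functions return M+N+K and K + (K+N) + (N+M), matching the generalized expressions.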

V. RESULTS AND COMPARATIVE ANALYSIS

Figure 5 shows the simulation results on Xilinx ISE 14.7 for the training and testing of the MNIST dataset for 100 images.

Fig. 5: Simulation results on Xilinx ISE 14.7 for training and testing of MNIST dataset for 100 images

Fig. 6: Accuracy Comparison on different Platforms.


The timing report was obtained after synthesizing the design on the Virtex 6 FPGA. The minimum clock period required for our design is 1.774 ns, and based on this value, we estimated the time required for one epoch to be 0.4403 ms for 100 images. In contrast, the software-based implementation of the ANN requires approximately 4 ms per epoch.

The results of the timing analysis in hardware and software are tabulated in Table 2, which shows that the hardware implementation is roughly 10 times faster than the software-based implementation. The speedup achieved is 4/0.4403 = 9.08, or approximately 10.

The proposed hardware-based implementation is approximately 10 times faster than the software-based implementation while sacrificing some accuracy. However, the results obtained show that the hardware-based implementation is a viable solution for applications where fast processing times are essential.

Table 2: Timing Comparison on different Platforms
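The timing figures can be sanity-checked with simple arithmetic. The sketch below is a back-of-the-envelope estimate, not the authors' calculation: it assumes that one epoch consists of 100 training passes (816 forward plus 848 backward cycles each) and 100 testing passes (816 cycles each), with no extra cycles for state transitions or memory loading. Under that assumption the estimate lands close to the reported 0.4403 ms; the small remaining gap is not itemized in the text.

```python
CLOCK_PERIOD_NS = 1.774            # minimum clock period from the timing report
FORWARD_CYCLES = 816               # per image, 784 x 32 x 10 network
BACKWARD_CYCLES = 848              # per image
IMAGES = 100                       # training images and testing images per epoch

# Assumed epoch composition: train on 100 images, then test on 100 images.
train_cycles = IMAGES * (FORWARD_CYCLES + BACKWARD_CYCLES)
test_cycles = IMAGES * FORWARD_CYCLES
total_cycles = train_cycles + test_cycles

epoch_ms = total_cycles * CLOCK_PERIOD_NS * 1e-6   # ns -> ms
max_freq_mhz = 1e3 / CLOCK_PERIOD_NS               # period in ns -> MHz

REPORTED_HW_MS = 0.4403            # hardware time per epoch reported in the text
REPORTED_SW_MS = 4.0               # software time per epoch reported in the text
speedup = REPORTED_SW_MS / REPORTED_HW_MS

print(total_cycles)                # 248000
print(f"{epoch_ms:.4f} ms")        # 0.4400 ms
print(f"{max_freq_mhz:.1f} MHz")   # 563.7 MHz
print(f"{speedup:.2f}x")           # 9.08x
```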

Tables 3 and 4 present a comparison between the proposed design and existing implementations in terms of speed, resources, and other parameters. The results indicate that the proposed design outperforms the existing designs in terms of speed, flexibility, power, and resource utilization.

Table 3: Comparison of Proposed design with High Level Synthesis based architectures from literature

Furthermore, it is worth noting that the proposed design is more efficient than the existing design, which only implements the forward propagation part in hardware, as shown in Table 5.

Table 4: Comparison of Proposed design with RTL designed based architectures from literature


Table 5: Comparison of Hardware requirements for forward propagation

Table 5 shows that our design uses roughly 0.2%, 2.76%, and 20% of the hardware of the non-pipelined, fully pipelined, and 8-stage pipelined designs, respectively, when compared with the 784 x 12 x 10 architecture of reference [19].

VI. CONCLUSION

This work presents a novel FPGA-based implementation of an artificial neural network that offers reconfigurability in terms of the number of layers, neurons, and activation functions for each layer. The implementation provides faster computation speed than the software-based implementation, but accuracy is compromised due to fixed-point computation. The design also outperforms pre-existing hardware-based implementations in terms of frequency and resource utilization. This work represents a significant contribution in demonstrating the potential of FPGA-based implementation in accelerating neural network prediction, rather than limiting the use of FPGAs to the recognition phase.

Future work for this research includes incorporating a linear feedback shift register (LFSR) to generate random numbers for weight initialization, processing real-time data using serial communication techniques, and improving the accuracy of the hardware-based implementation.

It should be noted that almost all recent research papers have focused on ANN inference with FPGAs; training an ANN with FPGAs has not been well explored by the community. This is likely due to the complexity of designing an FPGA system that can effectively pipeline the processes. The flexibility of FPGAs in terms of integrated circuit reconfiguration provides opportunities for implementing a wide range of operations and instructions.

Future research in this area can explore more complex hardware architectures and improved number representations to enhance the accuracy of hardware-based implementations.

REFERENCES

[1.] Nuzula Afianah, Agfianto Eko Putra, and Andi Dharmawan. High-level synthesize of backpropagation artificial neural network algorithm on the FPGA. In 2019 5th International Conference on Science and Technology (ICST), volume 1, pages 1–5. IEEE, 2019.

[2.] Ramón J Aliaga, Rafael Gadea, Ricardo J Colom, José M Monzó, Christoph W Lerche, and Jorge D Martínez. System-on-chip implementation of neural network training on FPGA. International Journal On Advances in Systems and Measurements, 2(1), 2009.

[3.] Song Bo, Kensuke Kawakami, Koji Nakano, and Yasuaki Ito. An RSA encryption hardware algorithm using a single DSP block and a single block RAM on the FPGA. International Journal of Networking and Computing, 1(2):277–289, 2011.

[4.] Mohammadreza Esmali Nojehdeh, Levent Aksoy, and Mustafa Altun. Efficient hardware implementation of artificial neural networks using approximate multiply-accumulate blocks. In 2020 IEEE Computer Society Annual Symposium on VLSI (ISVLSI), pages 96–101, 2020.

[5.] Simon S. Haykin. Neural networks and learning machines. Pearson Education, Upper Saddle River, NJ, third edition, 2009.

[6.] Albert Knebel and Dorin Patru. Educational neural network development and simulation platform. In 2020 St. Lawrence Section Meeting, 2020.

[7.] S.Y. Kung and J.N. Hwang. Digital VLSI architectures for neural networks. In IEEE International Symposium on Circuits and Systems, pages 445–448 vol. 1, 1989.

[8.] Shivani Kuninti and S Rooban. Backpropagation algorithm and its hardware implementations: A review. In Journal of Physics: Conference Series, volume 1804, page 012169. IOP Publishing, 2021.

[9.] Yihua Liao. Neural networks in hardware: A survey. Department of Computer Science, University of California, 2001.

[10.] Sainath Shravan Lingala, Swanand Bedekar, Piyush Tyagi, Purba Saha, and Priti Shahane. FPGA based implementation of neural network. In 2022 International Conference on Advances in Computing, Communication and Applied Informatics (ACCAI), pages 1–5. IEEE, 2022.


[11.] Harsh Mittal, Abhishek Sharma, and Thinagaran Perumal. FPGA implementation of handwritten number recognition using artificial neural network. In 2019 IEEE 8th Global Conference on Consumer Electronics (GCCE), pages 1010–1011. IEEE, 2019.

[12.] Esraa Z. Mohammed and Haitham Kareem Ali. Hardware implementation of artificial neural network using field programmable gate array. International Journal of Computer Theory and Engineering, pages 780–783, 2013.

[13.] Syahrulanuar Ngah, Rohani Abu Bakar, Abdullah Embong, and Saifudin Razali. Two-steps implementation of sigmoid function for artificial neural network in field programmable gate array. ARPN Journal of Engineering and Applied Sciences, 11(7):4882–4888, 2016.

[14.] R Pramodhini, Sunil S. Harakannanavar, CN Akshay, N Rakshith, Ritwik Shrivastava, and Akchhansh Gupta. Robust handwritten digit recognition system using hybrid artificial neural network on FPGA. In 2022 IEEE 2nd Mysore Sub Section International Conference (MysuruCon), pages 1–5, 2022.

[15.] L Ranganath, D Jay Kumar, and P Siva Nagendra Reddy. Design of MAC unit in artificial neural network architecture using Verilog HDL. In 2016 International Conference on Signal Processing, Communication, Power and Embedded System (SCOPES), pages 607–612. IEEE, 2016.

[16.] Yudong Tao, Rui Ma, Mei-Ling Shyu, and Shu-Ching Chen. Challenges in energy-efficient deep neural network training with FPGA. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), pages 1602–1611, 2020.

[17.] Huynh Viet Thang. Design of artificial neural network architecture for handwritten digit recognition on FPGA. Tạp chí Khoa học và Công nghệ - Đại học Đà Nẵng, 2016.

[18.] Huan Minh Vo. Implementing the on-chip back propagation learning algorithm on FPGA architecture. In 2017 International Conference on System Science and Engineering (ICSSE), pages 538–541, 2017.

[19.] Isaac Westby, Xiaokun Yang, Tao Liu, and Hailu Xu. FPGA acceleration on a multi-layer perceptron neural network for digit recognition. The Journal of Supercomputing, 77(12):14356–14373, 2021.


- Forensic Advantages and Disadvantages of Raman Spectroscopy Methods in Various Banknotes Analysis and The Observed Discordant ResultsDocument12 pagesForensic Advantages and Disadvantages of Raman Spectroscopy Methods in Various Banknotes Analysis and The Observed Discordant ResultsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Comparative Evaluation of Action of RISA and Sodium Hypochlorite on the Surface Roughness of Heat Treated Single Files, Hyflex EDM and One Curve- An Atomic Force Microscopic StudyDocument5 pagesComparative Evaluation of Action of RISA and Sodium Hypochlorite on the Surface Roughness of Heat Treated Single Files, Hyflex EDM and One Curve- An Atomic Force Microscopic StudyInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Examining the Benefits and Drawbacks of the Sand Dam Construction in Cadadley RiverbedDocument8 pagesExamining the Benefits and Drawbacks of the Sand Dam Construction in Cadadley RiverbedInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Predictive Analytics for Motorcycle Theft Detection and RecoveryDocument5 pagesPredictive Analytics for Motorcycle Theft Detection and RecoveryInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Computer Vision Gestures Recognition System Using Centralized Cloud ServerDocument9 pagesComputer Vision Gestures Recognition System Using Centralized Cloud ServerInternational Journal of Innovative Science and Research TechnologyNo ratings yet

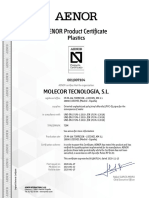

- AENOR Product Certificate: PlasticsDocument2 pagesAENOR Product Certificate: PlasticsEliud RodriguezNo ratings yet

- Tape RecorderDocument26 pagesTape RecorderSunnyKumar100% (1)

- Chuyên sâu tiếng anh 4 Cv2345 UNIT 11Document8 pagesChuyên sâu tiếng anh 4 Cv2345 UNIT 11kimhangsunriseNo ratings yet